20 minutes of discussion about innovation.

How can large companies innovate? from Andreessen Horowitz on Vimeo.

A bit of a throwback (first posted on my blog here), but here’s a 3-minute parody video from 2007 about the impending tech bubble. Fun, but not very accurate.

My friend and former colleague Corey Snipes has been working to get a Bootstrapper’s meetup off the ground in Denver. This is a small group (limited to 12, I believe) of people who are building products (typically software) and self-funding them. I believe most of the members are in solopreneur mode (I know Corey is).

I imagine this kind of support group would be fantastic–certainly I had a similar group when I was a consultant in the past, and bouncing ideas off of others in similar situations made the struggle much easier.

I’ve not made this meetup yet because a) I’m not sure I’m bootstrapping (and you know what, if you aren’t sure you’re bootstrapping, you aren’t bootstrapping!), b) I live in Boulder and Boulderites have a hard time leaving the Boulder Bubble, and c) Wednesdays in general are tough days for me to do anything outside of the house.

If you are a bootstrapper in the Denver area, take a look.

I was browsing Hacker News the other day and ran across this article, which laments how difficult it is to support a company with an open source project and notes that, insomuch as one can, consulting generates far more revenue than selling SaaS services like hosting. For the record, I’ve never touched LocomotiveCMS. From a brief glance, it looks nice.

While I feel for them, I think that they have alternatives:

In my comment on the HN post, I talk about how products often face a “round peg in an elliptical hole” problem. I meant that products often solve 80% of the problem for 80% of the users. They also require users to change their processes (more crystallization). Typically there’s just enough offset that people feel cognitive drag. (Of course, the same thing usually happens with custom solutions, you just don’t know that until you are done. Doh!)

Especially in crowded markets, like CMSes, it is far, far easier to sell enough hours to make a living customizing a solution than it is to sell enough products to make a living. Brennan Dunn covers this ground well. Every consulting company I’ve ever seen or been a part of, and every consultant I’ve ever known (except the ones who were contracting for one client and really were employees with more flexibility), dreams of transitioning from non-scalable consulting by the hour to scalable product sales. One friend even had a name for it–the “von MacIntyre machine”, which would make money while he slept.

But it’s hard.

27 minutes on profit models from Derek Sivers.

Two minutes of analysis on how business model innovation happens in new companies and old companies.

I originally wrote this in Dec of 2004. I still think that having someone who can answer engineers’ questions authoritatively increases productivity (of the engineer). However, now I’d emphasize that developers need to spend some time learning their domain to gain some intuition, and truly great business software engineers will learn when to bump a question up to a business person and when their intuition can be trusted.

————————————————————-

Back in college, when I took first year physics lab, there was a section of the course that focused on teaching the difference between precision and accuracy in measurement. This distinction was crucial in experimental physics, since measurement is the bedrock of such experimentation. Basically, precision is how many digits of a measurement actually mean something. If I measure the length of a room with my stride (and find it to be 30 feet long), the precision is less than if I measure the length of the room with a tape measure (and find it to be 33 feet, 6 and ¾ inches long). However, it’s possible that the stride measurement is more accurate than the length found with the tape measure, that is, it better reflects how long the room actually is. (Perhaps there’s clothing on the floor which adds to the tape measurement, but which I stride over.)
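To put numbers on the room example, here is a small sketch in Python. The true length of the room is not given above, so `TRUE_LENGTH_IN` is a made-up value chosen only for illustration; the two measurements are the ones from the paragraph.

```python
def to_inches(feet: int, inches: float = 0.0) -> float:
    """Convert a feet-and-inches measurement to inches."""
    return feet * 12 + inches


# Hypothetical true length of the room (30 ft 4 in), assumed for illustration only.
TRUE_LENGTH_IN = to_inches(30, 4)

# Stride measurement: low precision, only meaningful to about a foot.
stride = to_inches(30)

# Tape measurement: high precision (quarter inches), but inflated by clutter on the floor.
tape = to_inches(33, 6.75)


def abs_error(measured: float) -> float:
    """Accuracy is about distance from the true value, not about digits."""
    return abs(measured - TRUE_LENGTH_IN)


print(f"stride error: {abs_error(stride)} in")
print(f"tape error:   {abs_error(tape)} in")
```

Under that assumption, the tape reading carries more meaningful digits yet lands further from the real length than the stride reading does.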

These concepts aren’t just valid in physics; I think they’re also useful in software. When building a piece of software, I am precise if I build what I say I am going to build, and I am accurate if what I build actually meets the client’s business needs, that is, it solves the business problem. Almost every development tool either makes development more precise or more accurate.

The concept of precision lends itself easily to automation. For example, unit testing is rapidly gaining credence as a useful software technique. With unit testing, a developer writes test cases for each part of their code (often at the method level). The running of these tests ensures that code is actually doing what the developer thinks it is doing. I like writing unit tests; it gives me comfort to know that corner cases are taken care of and that changes to code can be fairly easily regression tested. Other techniques besides unit testing that help ensure precision include:

Round tripping: using a tool like TogetherJ, I can ensure that the model (often described in UML) and the code are in sync. This makes it easier for me to verify my mental model against the code.

Specification writing: The more precise a spec is, the easier it is to translate into code.

Compilers: the checking that occurs at compilation time can be very helpful in ensuring that the code is doing what I think it is doing–at a very low level. Obviously, this technique depends on the language used.
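As an illustration of the unit testing described above, here is a minimal sketch using Python's built-in `unittest` module. The function `split_evenly` is a hypothetical example invented for this sketch, not code from any real project; the point is only that each test pins down one behavior, including a corner case.

```python
import unittest


def split_evenly(total_cents: int, people: int) -> list[int]:
    """Split an amount in cents so that the shares sum exactly to the total."""
    if people <= 0:
        raise ValueError("people must be positive")
    base, remainder = divmod(total_cents, people)
    # The first `remainder` people pay one extra cent so nothing is lost.
    return [base + 1 if i < remainder else base for i in range(people)]


class SplitEvenlyTest(unittest.TestCase):
    def test_even_split(self):
        self.assertEqual(split_evenly(900, 3), [300, 300, 300])

    def test_remainder_is_distributed(self):
        # Corner case: 100 cents does not divide evenly by 3.
        self.assertEqual(split_evenly(100, 3), [34, 33, 33])

    def test_zero_people_is_rejected(self):
        with self.assertRaises(ValueError):
            split_evenly(100, 0)
```

Saved to a file, these tests can be run with `python -m unittest <file>`. If a later change breaks the remainder handling, the second test fails right away, which is the regression safety net mentioned above.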

Now, precision is needed, because if I am not confident that I understand what the code is doing, then I’m in real trouble. However, accuracy is much more important. Having a customer onsite is a great example of a technique to ensure accuracy: you have a business domain expert available all the time for developers’ questions. In this situation, when a developer stumbles across a part of the business problem that they don’t quite understand, they don’t do what developers normally do (in order of decreasing accuracy):

Instead, they have a real live business person, to whom this software really matters (hopefully), who they can ask. Doing this makes it much more likely that the final solution will actually solve the business problem. Other techniques to help improve accuracy include:

Issue tracking software (I use Bugzilla): Having a place where questions and conversations are recorded is truly helpful in making sure the mental model of the business user and the programmer are in sync. Using a web based tool means that non-technical users can participate and contribute.

Specification writing: A well written spec allows both the business user and developer to have a sense of what is being built, which means that the business user can correct invalid notions at an early stage. However, if a spec is too detailed, it can be used to justify precision at the cost of accuracy (‘hey, the code does exactly what’s specified’ is the excuse you’ll hear).

Spring and other dependency injection tools, as well as IDEs: These tools help accuracy by decreasing the costs of changing code.

Precision and accuracy are both important in software engineering. Perhaps the best way to characterize the two concepts is that precision is the mapping of the programmer’s model of the problem to the computer’s model, whereas accuracy is the mapping of the business’ needs to the programmer’s model. However, though both are needed, accuracy is much harder to obtain. Knowing that I’m building precisely what I think I’m building is beneficial only insofar as what I think I’m building is actually what the customer needs.

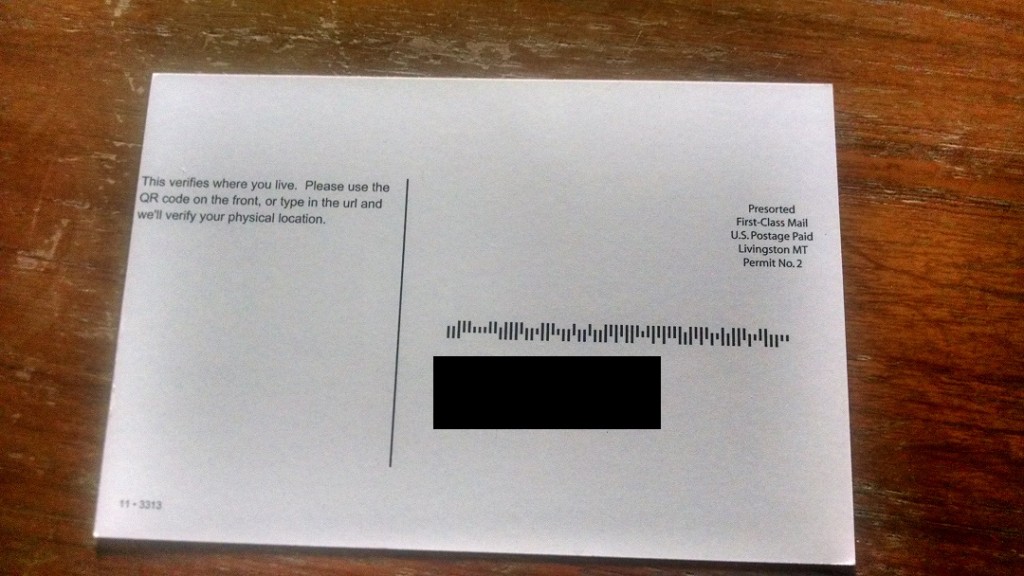

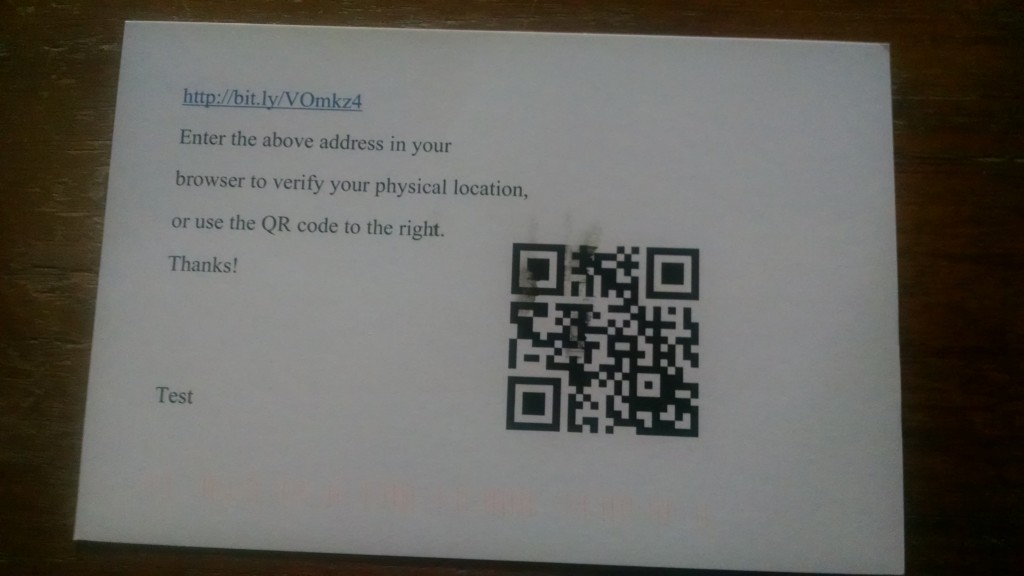

A few months ago, I wrote a Zapier app to integrate with the Lob postcard API. I actually spent the 94 cents to get a postcard delivered to me (I paid 24 cents too much, as Lob has now dropped their price). The text of the postcard doesn’t really matter, but it was an idea I had to offer a SaaS that would verify someone lived where they said they lived, using postal mail. Here are the front and back of the postcard (address is blacked out).

Here is the PDF that Lob generated from both a PDF file I generated for the front (the QR code was created using this site) and a text message for the back.

A few observations about the postcard.

So, if I were going to use Lob for production, I would send a few more test mailings and make sure that the smudge was a one off and not a systemic issue. I would definitely generate PDFs for both the front and back sides–the control you have is worth the hassle. Luckily, there are many ways to generate a PDF nowadays (including, per Atwood’s Law, JavaScript). I also would not use it for time sensitive notifications. To be fair, any postal mail has this limitation. For such notifications, services like Twilio or email are better fits.
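Returning to the address verification idea, here is a rough sketch of the part that has nothing to do with printing or mailing: issuing a code to put on the postcard and checking it when the recipient types it in. It uses only the Python standard library. The function names, the 8-digit format, and the choice to store a hash are all assumptions made for this sketch; it does not call Lob's API or reflect how the Zapier app worked.

```python
import hashlib
import hmac
import secrets


def new_verification_code(length: int = 8) -> str:
    """Return a random numeric code to print on the postcard (easy to type)."""
    return "".join(secrets.choice("0123456789") for _ in range(length))


def fingerprint(code: str) -> str:
    """Hash the code so the server can keep the hash rather than the plain value."""
    return hashlib.sha256(code.encode("utf-8")).hexdigest()


def is_valid(submitted: str, stored_fingerprint: str) -> bool:
    """Compare the submitted code against the stored hash in constant time."""
    return hmac.compare_digest(fingerprint(submitted.strip()), stored_fingerprint)


# Usage: generate a code, keep only its fingerprint, mail the code itself.
code = new_verification_code()
stored = fingerprint(code)
print(is_valid(code, stored))
```

A real service would also need expiry, rate limiting on guesses, and a link between the code and the address it was mailed to; those are left out here.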

In the months since I discovered Lob, I’ve been looking for a standalone business case. However, business needs that are:

seem pretty few and far between. You can see a short discussion I kicked off on Hacker News. However, they’ve raised plenty of money, so they don’t appear to be going anywhere soon.

But the non-standalone business cases for direct postcard mail are numerous (just look in your mailbox).

Via Fred Wilson, here’s a 50 minute discussion with Marc Andreessen (of much fame) about entrepreneurship, tech trends and more.

This is a repost from over a decade ago, about how software coalesces and defines business processes. The post is a little rough (“computerizing tasks”?), but hey, I’d only been blogging for months.

The ideas are sound, though. The longer I’ve been around this industry, the more the ideas in this post are reinforced.

————————————————————-

I’m in the process of helping a small business migrate an application that they use from a Paradox back end to a PostgreSQL back end. The front end will remain written in Paradox. (There are a number of reasons for this–they’d like to have a more robust database, capable of handling more users. Also, Paradox is on the way out. A Google search doesn’t turn up any pages from corel.com in the top 10. Ominous?)

I wrote this application a few years ago. Suffice it to say that I’ve learned a lot since then, and wish I could rectify a few mistakes. But that’s another post. What I’d really like to talk about now is how computer programs crystallize business processes.

Business processes are ‘how things get done.’ For instance, I write software and sell it. If I have a program to write, I specify the requirements, get the client to sign off on them (perhaps with some negotiation), design the program, code the program, test it, deploy it, make changes that the client wants, and maintain it. This is a business process, but it’s pretty fluid. If I need to get additional requirements specification after design, I can do that. Most business processes are fluid, with a few constraints. These constraints can be positive: I need to get client sign off (otherwise I won’t get paid). Or they can be negative: I can’t program .NET because I don’t have Visual Studio.NET, or I can’t program .NET because I don’t want to learn it.

Computerizing tasks can make processes much, much easier. Learning how to mail merge can save time when invoicing, or sending out those ever impressive holiday gift cards. But everything has its cost, and computerizing processes is no different. Processes become harder to change after a program has been written or installed to ‘help’ with them. For small businesses, such process engineering is doubly calcifying, because few folks have time to think about how to make things better (they’re running just as fast as they can to stay in place) and also because computer expertise is at a premium. (Realizing this is a fact and that folks will take a less technically excellent solution if it’s maintainable by normal people is what has helped Microsoft make so much money. The good is the enemy of the best and if you can have a pretty good solution for one quarter of the price of a perfect solution, most folks will choose the first.)

So, what happens? People, being more flexible than computers, adjust themselves to the process, which, in a matter of months or years, may become obsolete. It may not do what they need it to do, or it may require them to do extra labor. However, because it is a known constraint and it isn’t worth the investment to change, it remains. I’ve seen cruft in computer programs (which one friend of mine once declared was nothing but condensed business knowledge), but I’m also starting to realize that cruft exists in businesses too. Of course, sweeping away business process cruft assumes the same risks as getting rid of code cruft. There are costs to getting rid of the unneeded processes, and the cost of finding the problems, fixing them, documenting them, and training employees on the new ones, may exceed the cost of just putting up with them.

“A computer lets you make more mistakes faster than any invention in human history – with the possible exceptions of handguns and tequila.” -Mitch Ratcliffe, Technology Review, April 1992

A computer, by virtue of being able to instantaneously recall and process vast amounts of data, also crystallizes business processes, making it harder to change them. In addition to letting you make mistakes quickly, it also forces you to let mistakes stand uncorrected.